The trove of files that make up the Panama Papers is likely the largest dataset of leaked insider information in the history of journalism.

For ICIJ’s Data and Research Unit, it offered a unique set of challenges. The overall size of the data (2.6 terabytes, 11.5 million files), the variety of file types (from spreadsheets, emails and PDFs to obscure and old formats no longer in use), and the logistics of making it all securely searchable for more than 370 journalists around the world are just a few of the hurdles faced over the course of the 12-month investigation.

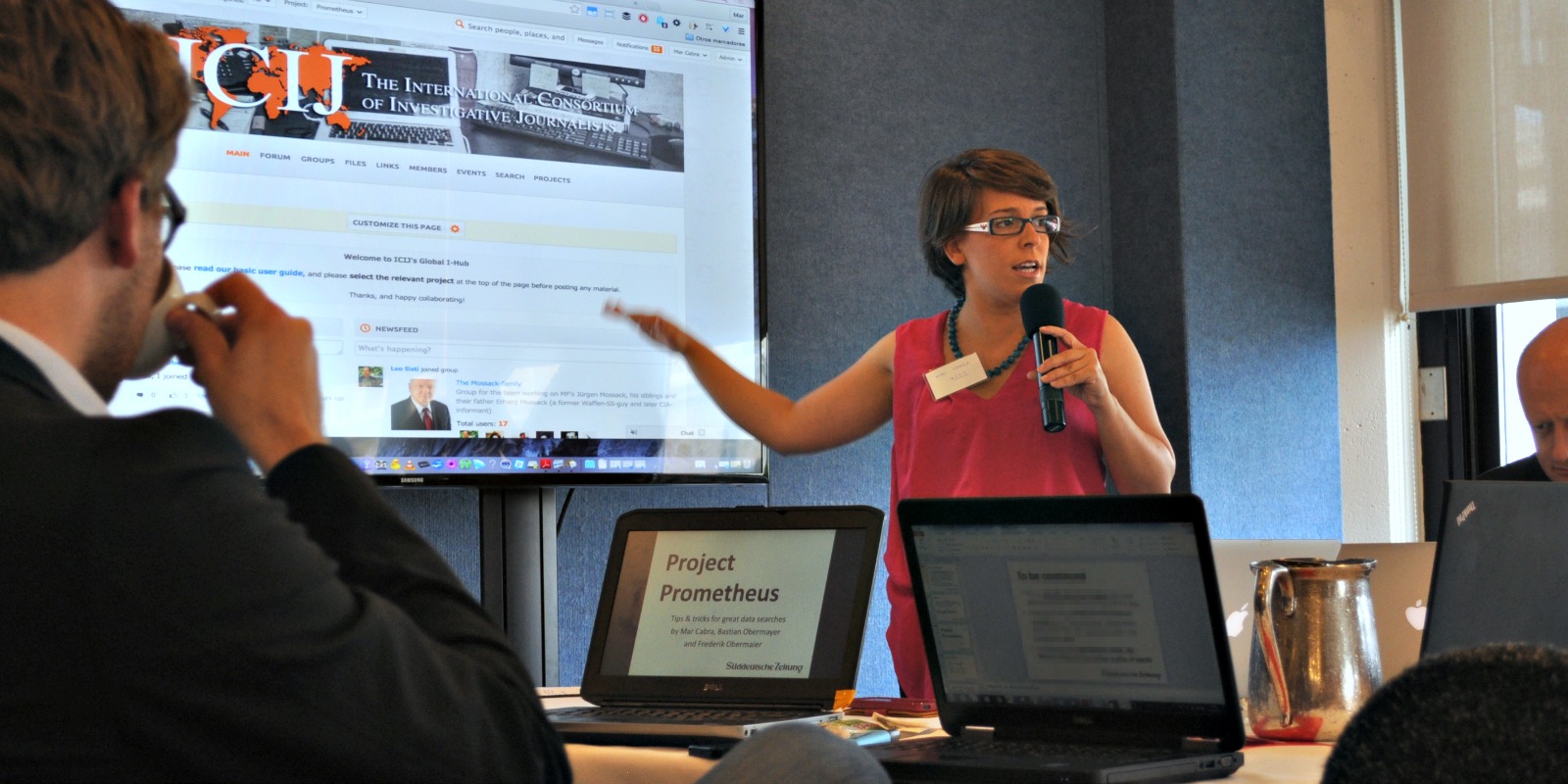

ICIJ member and data unit leader Mar Cabra recently spoke with journalism tech site Source about the people, the technology and the data journalism behind the Panama Papers. This post is republished with their kind permission.

—

Q. So, very first thing: ICIJ has said that it will release a batch of data later this spring, but not the entire dataset. Could you say a little about that, and about the way you’re timing the reporting?

The plan is that we’re actually going to keep reporting: some partners will be publishing for almost two weeks for sure. Then in early May we’re going to release all the names connected to more than 200,000 offshore companies, so we’re talking about the beneficiaries, the directors, the shareholders, the intermediaries, and the addresses connected to those entities in 21 jurisdictions. We expect to have some bang around that, too.

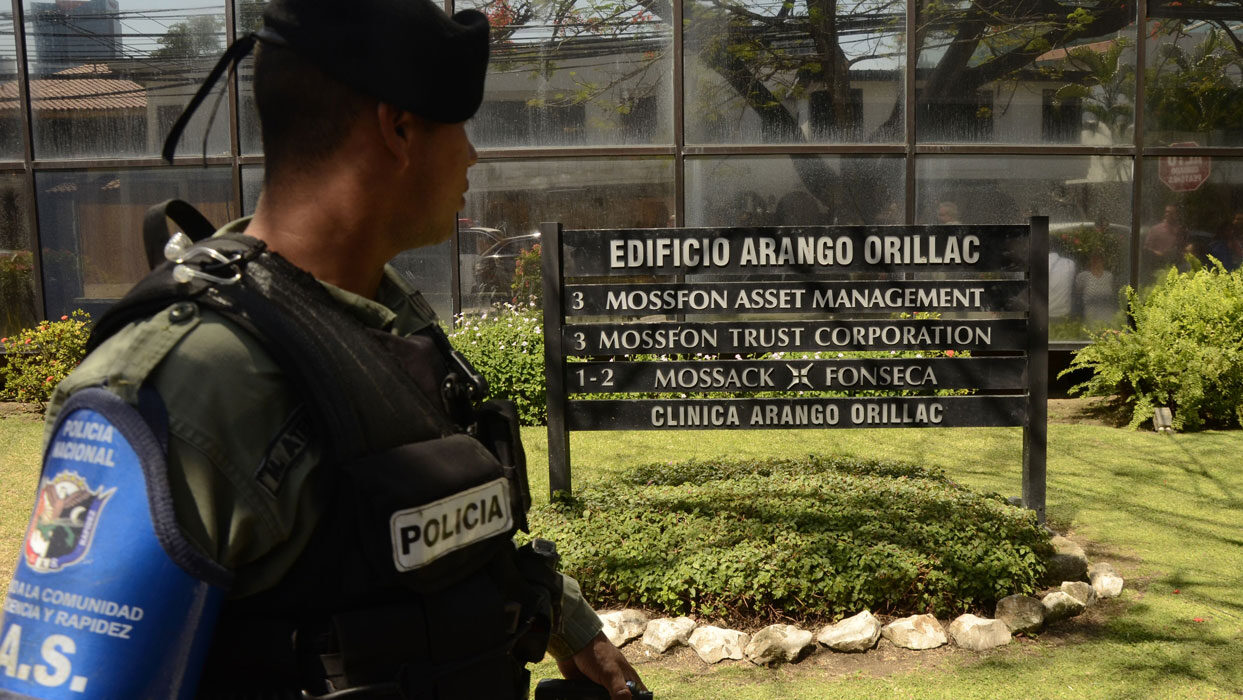

But we’re not going to release all 11.5 million files, we’re going to release the structured data, which is the internal Mossack Fonseca database. This is especially valuable because tax havens sell secrecy, and their secrecy relies mainly on the fact that corporate registries are opaque and not accessible, so we think there’s a great public value in releasing the names of companies and who’s behind them.

We already did this in June 2013 in the Offshore Leaks database that you can access right now. We had a leak then similar to what we have now: we had internal documents and data from two offshore service providers, which is basically what Mossack does. The only difference now is that this leak includes much more information and is much bigger, and the clients are high-level clients, so that’s why this leak is very important. We’re going to merge the two databases and all of them are going to be put together at the Offshore Leaks URL. You’ll be able to search what could amount to the biggest public database of offshore companies ever.

Data forensics

Q. What was it like to work with the leaked data? What kind of processing did you have to do?

Working with this data has been challenging for many different reasons. The first reason is, it’s huge: we’re talking about 2.6TB. The second reason is that it didn’t all come at the same time; we didn’t receive a 2.6TB hard drive. We had to deal with incremental information, and we also had to deal with a lot of images. The majority of the files are emails and database files. There are also a lot of PDFs and TIFFs, so we had to do a lot of OCR-ing for millions of documents.

So first, most of the leak was unstructured data. Second, it was not easy working with the structured data. The Mossack Fonseca internal database didn’t come to us in the raw, original format, unfortunately. We had to do reverse-engineering to reconstruct the database, and connect the dots based on codes that the documents had.

We’ve had to do that with every leak we’ve received: We had to do it with Offshore Leaks in 2013, we had to do it with Swiss Leaks last year, and we had to do it again this year. Our programmer, Rigoberto Carvajal, is a true magician, because he has become an expert in reverse-engineering databases. He and Miguel Fiandor reverse-engineered the database, reconstructed the Mossack Fonseca internal files, and put them into a graph-database format. And that’s the base of what we’re going to be doing in the new Offshore Leaks database, the improved version.

The tech stack

We believe in open source technology and try to use it as much as possible. We used Apache Solr for the indexing and Apache Tika for document processing. Tika is great because it processes dozens of different formats and it’s very powerful. Tika interacts with Tesseract, so we did the OCR-ing with Tesseract.

To OCR the images, we created an army of 30–40 temporary servers in Amazon that allowed us to process the documents in parallel and do parallel OCR-ing. If it was very slow, we’d increase the number of servers — if it was going fine, we would decrease because of course those servers have a cost.
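
The scale-up and scale-down rule described here can be illustrated with a small sizing function. This is only a sketch under stated assumptions, not ICIJ's actual tooling: the function name, the throughput figure, and the deadline are all hypothetical, and the cap of 40 simply mirrors the upper end of the 30–40 servers mentioned above.

```python
import math


def servers_needed(pending_docs, docs_per_server_hour, target_hours,
                   min_servers=1, max_servers=40):
    """Estimate how many OCR workers clear the backlog within target_hours.

    All parameters are hypothetical inputs; a real deployment would measure
    throughput instead of assuming it.
    """
    if pending_docs <= 0:
        # Nothing queued: fall back to the minimum to keep costs down.
        return min_servers
    required = math.ceil(pending_docs / (docs_per_server_hour * target_hours))
    # Clamp between the floor and the budget ceiling.
    return max(min_servers, min(max_servers, required))
```

With an assumed 500 documents per server per hour and an eight-hour target, a backlog of 120,000 documents asks for 30 workers, while a much larger backlog is capped at the ceiling.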

Then we put the data up, but the problem with Solr was it didn’t have a user interface, so we used Project Blacklight, which is open source software normally used by librarians. We used it for the journalists. It’s simple because it allows you to do faceted search — so, for example, you can facet by the folder structure of the leak, by years, by type of file. There were more complex things — it supports queries in regular expressions, so the more advanced users were able to search for documents with a certain pattern of numbers that, for example, passports use. You could also preview and download the documents. ICIJ open-sourced the code of our document processing chain, created by our web developer Matthew Caruana Galizia.
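
To make the faceting and regular-expression searches more concrete, here is a minimal sketch of how such a request could be assembled for Solr's standard query parser. The parameters `q`, `rows`, `facet` and `facet.field` and the `field:/regex/` syntax are standard Solr features, but the field names (`content`, `file_type`, `year`) and the nine-digit pattern are assumptions made for illustration, not the schema or queries ICIJ used.

```python
from urllib.parse import parse_qs, urlencode


def regex_clause(field, pattern):
    """Build a Lucene-style regular-expression clause, e.g. content:/[0-9]{9}/.

    Note: the regex is matched against individual indexed terms.
    """
    return f"{field}:/{pattern}/"


def build_facet_query(query, facet_fields, rows=10):
    """Return a URL query string asking Solr for results plus facet counts."""
    params = [("q", query), ("rows", str(rows)), ("facet", "true")]
    # One facet.field parameter per facet the interface should display.
    params += [("facet.field", field) for field in facet_fields]
    return urlencode(params)


# Hypothetical field names and a made-up nine-digit "document number" pattern.
query_string = build_facet_query(
    regex_clause("content", "[0-9]{9}"),
    facet_fields=["file_type", "year"],
)
decoded = parse_qs(query_string)
```

A front end such as Blacklight would issue requests of this kind on the user's behalf and render the returned facet counts as clickable filters.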

We also developed a batch-searching feature. Say you were looking for politicians in your country: you would upload your list to Blacklight and get a CSV back saying yes, there are matches for these names, and not only exact matches but also matches based on proximity. So you would say “I want Mar Cabra proximity 2” and that would give you “Mar Cabra,” “Mar whatever Cabra,” and “Cabra, Mar.” That was good, because very quickly journalists were able to see: I have this list of politicians and they are in the data!
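
A rough sketch of what such a batch search might look like follows. It relies on the standard Lucene/Solr phrase-with-slop syntax (`"Mar Cabra"~2`); everything else is an assumption for illustration, including the function names and the `count_hits` callback, which stands in for whatever actually queries the index.

```python
import csv
import io


def proximity_query(name, slop=2):
    """Build a phrase query that tolerates up to `slop` position moves."""
    escaped = name.replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"~{slop}'


def batch_search(names, count_hits, slop=2):
    """Run one proximity query per name and return the results as CSV text.

    `count_hits` is a hypothetical caller-supplied function that takes a
    query string and returns the number of matching documents.
    """
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(["name", "query", "hits"])
    for name in names:
        query = proximity_query(name, slop)
        writer.writerow([name, query, count_hits(query)])
    return out.getvalue()
```

In Lucene's slop semantics, a slop of 2 is enough to match the two words in swapped order, which is consistent with the “Cabra, Mar” example above.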

For the visualization of the Mossack Fonseca internal database, we worked with another tool called Linkurious. It’s not open source, it’s licensed software, but we have an agreement with them, and they allowed us to work with it. It allows you to represent data in graphs. We had a version of Linkurious on our servers, so no one else had the data. It was pretty intuitive — journalists had to click on dots that expanded, basically, and could search the names.

We had the data in a relational database format in SQL, and thanks to ETL (Extract, Transform, and Load) software Talend, we were able to easily transform the data from SQL to Neo4j (the graph-database format we used). Once the data was transformed, it was just a matter of plugging it into Linkurious, and in a couple of minutes, you have it visualized in a networked way, so anyone can log in from anywhere in the world. That was another reason we really liked Linkurious and Neo4j: they’re very quick when representing graph data, and the visualizations were easy to understand for everybody. The not-very-tech-savvy reporter could expand the dots like magic, and more technically expert reporters and programmers could use the Neo4j query language, Cypher, to do more complex queries, like show me everybody within two degrees of separation of this person, or show me all the connected dots…
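
The idea of turning relational rows into a graph and asking for everyone within two degrees of separation can be sketched in a few lines. This is an illustration only: it uses an in-memory SQLite table and a plain breadth-first search rather than Talend and Neo4j, and the table name, column names, sample rows, and the property name in the Cypher string are all hypothetical.

```python
import sqlite3
from collections import defaultdict, deque


def load_graph(conn):
    """Read (source, target) rows from a link table into an undirected graph."""
    graph = defaultdict(set)
    for source, target in conn.execute("SELECT source, target FROM links"):
        graph[source].add(target)
        graph[target].add(source)
    return graph


def within_degrees(graph, start, max_depth=2):
    """Breadth-first search: nodes reachable from start in <= max_depth hops."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the requested distance
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, depth + 1))
    seen.discard(start)
    return seen


# A roughly equivalent Cypher query (the `name` property is an assumption).
TWO_DEGREES_CYPHER = """
MATCH (p {name: $name})-[*1..2]-(other)
WHERE other <> p
RETURN DISTINCT other.name
"""

# Made-up sample data standing in for the relational export.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE links (source TEXT, target TEXT)")
conn.executemany("INSERT INTO links VALUES (?, ?)", [
    ("Person A", "Company X"),
    ("Company X", "Person B"),
    ("Person B", "Company Y"),
    ("Company Y", "Person C"),
])
graph = load_graph(conn)
nearby = within_degrees(graph, "Person A")
```

Here `nearby` contains “Company X” and “Person B” but not “Company Y”, which sits three hops away. In a graph database the variable-length pattern `[*1..2]` expresses the same traversal declaratively.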

We’re already using the graphs from Linkurious and the database in the interactive The Power Players, which shows more than 70 politicians. Every time you see a graph in the interactive, that’s the database. Linkurious has a great feature, which is that you can make calls to the API, so we make calls to the API to draw the data from this new database. It also has a built-in widget feature, so if you’re using Linkurious for your reporting and you’d like a graph, you create a widget, publish the widget and embed it in your story, and it’s interactive — you can move the nodes around and display any kind of info panel… It’s great because we didn’t have to work on any of that ourselves.

We’re really happy with Linkurious — they were super supportive. Whenever we asked them questions, or asked for a feature we needed, two days later it was implemented! That communication was great, it was like having an expanded development team.

For communication, we have the Global I-Hub, which is a platform based on open source software called Oxwall. Oxwall is a social network, like Facebook, which has a wall when you log in with the latest in your network — it has forum topics, links, you can share files, and you can chat with people in real time.

Oxwall is designed for people who want to have a social network — in the form for user registration, one of the options we had to disable was “Are you looking for a male or for a female?” So… that we disabled, because of course it was a bit confusing! We repurposed it to use it for sharing and social networking around investigative reporting, and thanks to a grant from the Knight Prototype Fund, we improved the security around Oxwall and implemented two-step authentication on the I-Hub.

That was a bit of a nightmare, because some reporters didn’t quite get it, and there were a lot of problems, but we did two-step authentication using Google Authenticator. In the end, everybody got it! We were worried because we were working with journalists in developing countries, and we worried that maybe some reporters wouldn’t have a smartphone, but we were lucky and we didn’t have that problem.
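
For readers curious about what happens behind a Google Authenticator code: the app generates time-based one-time passwords (TOTP, RFC 6238) from a shared secret, using HMAC-SHA1, 30-second steps and six digits. The sketch below shows that calculation with the Python standard library. It is not the I-Hub's implementation; the `verify` helper and its one-step tolerance window are assumptions about how a server might check a submitted code.

```python
import base64
import hashlib
import hmac
import struct
import time


def hotp(secret, counter, digits=6):
    """HMAC-based one-time password (RFC 4226) for a raw byte secret."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret_b32, now=None, step=30, digits=6):
    """Time-based one-time password (RFC 6238) for a base32-encoded secret."""
    secret = base64.b32decode(secret_b32, casefold=True)
    timestamp = time.time() if now is None else now
    return hotp(secret, int(timestamp // step), digits)


def verify(secret_b32, submitted, now=None, step=30, window=1):
    """Accept codes from the current step or `window` steps either side.

    The tolerance window is an assumed policy to absorb clock drift.
    """
    timestamp = time.time() if now is None else now
    return any(
        hmac.compare_digest(totp(secret_b32, timestamp + drift * step, step),
                            submitted)
        for drift in range(-window, window + 1)
    )
```

Because the code depends only on the shared secret and the clock, the phone needs no network connection to produce it, though it does need a reasonably accurate clock.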

Everybody was using the platform to communicate and log in every day or several times every week and share the tips and exchange ideas, and when somebody found a cross-border connection… One day a colleague of ICIJ in Spain was like, “Oh my god, I found [football player Lionel] Messi!” And everybody’s like “Oh my god, Messi!”

We knew we had things connected to FIFA and to UEFA, we knew there were soccer players, we knew that sports and offshore were intimately connected, but it’s at that point that it’s so useful because he says “Oh my god I found Messi!” and all of a sudden everybody has Messi and everybody’s covering Messi. The communication was very important.

A platform three years in the making

We had already used many of these platforms before. We really have three types of platforms: the Global I-Hub for communication, the combination of Solr and Blacklight, and Linkurious.

We had already used previous versions of the communication platform, but it wasn’t until the Knight Prototype Fund grant that we improved its security, and this was the first time we had put it into practice. We were using Oxwall in our previous investigations: in Luxembourg Leaks, which was published in November 2014, we were already using Oxwall. But Oxwall, again, is a social networking platform built for meeting people, so it had to be improved. We got the Knight Prototype Fund grant and started work in mid-2014. It took us six months, and then a bit more than six months, because in the end there are tricks you want to do. At that point, we’re at the beginning of 2015, and publishing the Swiss Leaks data and investigation. And then a year ago in April we got the call from Süddeutsche Zeitung. We were testing the platform at that time with ICIJ members, and then saw the perfect opportunity in this project to put it to work.

The other two platforms we had already used in Swiss Leaks, but we’ve improved them. The biggest problem was all the file formats that we had. Before, we had used Blacklight and Solr with all PDFs or all XLS files, but in here, you cannot imagine! There are formats I’ve never heard of, there are things you can’t even find in Google. We got around 99% of the data OCRed and indexed — I think that’s amazing given the great variety of formats that we encountered.

I think something that is very important to have in mind is that my users, who are journalists, range from the super-techie reporter who has covered the Snowden files, knows everything about encryption, and works with a great developer, to the other side of the spectrum, the very good traditional investigative reporter who has sources and is great at digging into documents and talking to people but has a hard time dealing with technology. So every tool we produce and use has to cover both fronts. We have to go for simple tools that also allow for more complex work.

The team behind the data

There’s something important to know about ICIJ: we’re a very small team. I’ve been working with ICIJ since 2011. I’m from Spain, and I studied at Columbia doing investigative reporting and data journalism there, and ICIJ hired me to come back to Spain and work here. When I started in 2011, ICIJ had a team of four people, and the team expanded or not depending on the project — we hired contractors. Back then, we didn’t have any in-house data capabilities.

We did the release of the Offshore Leaks database in June of 2013 in cooperation with La Nación in Costa Rica, which had a very good data team with two great programmers and a great leader, Giannina Segnini. After Offshore Leaks in 2013, and especially after that release, we realized, oh my god, we need to stop doing this externally! We need to have experts and developers in-house that work with us. I had been specializing in data journalism, so when we made that decision, it became evident that I was a good fit to lead the team. Giannina had left her position at La Nación to teach at Columbia, and the two programmers on her team, Rigoberto Carvajal and Matthew Caruana Galizia, came to work with us, and we started a data team at ICIJ. ICIJ today has 12 staff members and the Data and Research unit is half of the total staff. We have four developers and three journalists. Emilia Díaz Struck, a great data-oriented researcher, is the research editor, and I lead the team.

I’m not a programmer, I’m a journalist who discovered data journalism at Columbia University back in 2009, 2010. I thought if I could tell stories in a systematic way, it was much better than telling random stories of random victims. I’ve been pushing for data journalism in Spain, and I co-created the first-ever Master’s degree in Spain on investigative reporting, data journalism, and visualization. With a colleague, I also created Jornadas Periodismo de Datos, “the NICAR conference of Spain.” It’s an annual data journalism conference for around 500 people trying to learn about data. So I’m very tuned into data, and I’m a data journalist myself, not from a developer background, but I have great developers on my team.

One thing that is very important with this work is trust. So whenever we hire somebody, it has to be someone who’s been highly recommended by colleagues and people we know, because we cannot trust this data to just anybody. We have to have references from very close people when dealing with this.

An alliance built on trust

Q. So, speaking of trust, there is this central mystery to me about this project — how did you keep it secret for all this time, with so many people working on it around the world?

I have to say, I’m amazed myself that we haven’t had any major problems with this, but it makes me believe in the human race, because it’s really about trust. That’s why choosing and picking the team is so important. We need media organizations that want to collaborate, we need journalists that we can trust and that follow the agreement. Every person that joins the project needs to sign an agreement saying they’re going to respect the embargo and we’re all going to go out at the same time. And the journalists who have worked with us before know that it’s for their own benefit, to keep it quiet, because if we all publish at the same time, there’s a big bang. But if there’s a leak, it loses that power.

And you just have to look at the impact, you know? If we had not published all together at the same time in more than 100 media organizations, it would not have been the same! Here in Spain, the two media organizations we work with were amazed about the world reaction. But again, it’s all about trust.

My boss, Marina Walker Guevara, always says that this is like bringing guests to a dinner party. You need to choose the ones who are not going to cause trouble, the ones who are going to have a nice conversation. If you know that two of them don’t get along very well, you seat them on opposite sides of the table.

To me, it’s the most amazing thing — it may sound harsh, but it makes me believe in humans, in us, in our power as individuals and the power we can achieve working together. And I think that’s the way to go. There’s no way we could have analyzed 11.5 million files, 2.6 TB of information without a collaborative effort.

The future of leak reporting

Q. So what happens after the Panama Papers?

Something we’ve realized is that journalists are starting to get big collections of documents on their computers. So for example, in Argentina, journalists had all the official gazettes of Argentina and some other documents, and they have these big searchable databases of documents. In Switzerland, they had the same thing: they had a lot of documents from their investigation all in one place. Right now, they have to download the documents from us and feed them into their system to see if there are connections. We want to get the platforms of the media organizations and our platforms to talk to each other and do massive matches. So far we have only been able to do targeted searches or searches through spreadsheets, and the next step is to get collections of documents talking to each other.

In parallel with the Panama Papers, ICIJ is already working on DataShare, something we presented for a Knight News Challenge grant. We didn’t get the money, so we’re funding it through other means — the Open Society Foundations have given us some money to work on it, and we’re looking for more funding.

So we’re actually working on a program that they’ll be able to install on their computers in, like, “Tabula mode,” right? So as with Tabula, you install it on your computer. It works in your browser, and it allows you to extract the entities in your documents. What you share with the network are the entities in your documents, and then our search engine basically does the matches using fuzzy matching between the entities. It tells you if there’s somebody else across the network with the same entities, and then you two have to get together and start talking to share the documents.

So we need big collections of documents to talk to each other, and we’re trying to solve that at the level of the entities, because journalists don’t want to share everything they have — they have exclusive documents. But if you create an index of entities in their documents, it’s not so much of a problem, and everybody can benefit from those matches. And of course, we’re having a lot of headaches with natural language processing, and that’s something we’re dealing with inside the Panama Papers project as well.
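
Matching at the level of entities rather than documents can be illustrated with a short fuzzy-matching sketch. How DataShare actually compares entities is not described here, so this example swaps in Python's standard `difflib.SequenceMatcher` on normalized names; the function names, the normalization rule, and the 0.85 threshold are all assumptions.

```python
import re
from difflib import SequenceMatcher


def normalize(entity):
    """Lowercase, drop punctuation, and sort tokens so word order is ignored."""
    tokens = re.findall(r"\w+", entity.lower())
    return " ".join(sorted(tokens))


def similarity(a, b):
    """Similarity ratio between two entity names, from 0.0 to 1.0."""
    return SequenceMatcher(None, normalize(a), normalize(b)).ratio()


def match_entities(mine, theirs, threshold=0.85):
    """Return (mine, theirs, score) for pairs at or above the threshold.

    Only entity strings are compared; the underlying documents never
    leave their owner's machine in this scheme.
    """
    matches = []
    for a in mine:
        for b in theirs:
            score = similarity(a, b)
            if score >= threshold:
                matches.append((a, b, round(score, 2)))
    return matches
```

Two newsrooms whose indexes both contain a given name, even written in a different order or with different punctuation, would get a match and could then decide between themselves whether to share the documents behind it.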

Q. Do you already have your next data project in view?

Yes! We have a team working on the next project, and scoping it — we have a few themes and we’re seeing which of the themes is the next one.